Full Citation

Baker, R. S., & Hawn, A. (2022). Algorithmic Bias in Education. International Journal of Artificial Intelligence in Education, 32(4), 1052-1092.

Plus: Williamson, B., Molnar, A., & Boninger, F. (2024). Time for a Pause: Without Effective Public Oversight, AI in Schools Will Do More Harm Than Good. National Education Policy Center.

Publication Type

Peer-reviewed research review (Baker & Hawn) and policy brief (National Education Policy Center)

What They Studied

Analysis of AI and algorithmic systems being introduced in education, examining evidence for effectiveness, bias concerns, and oversight needs.

Key Findings

AI in education is being adopted without adequate evidence:

- Claims about “personalised learning” are largely unsupported

- Most AI EdTech products haven’t been rigorously evaluated

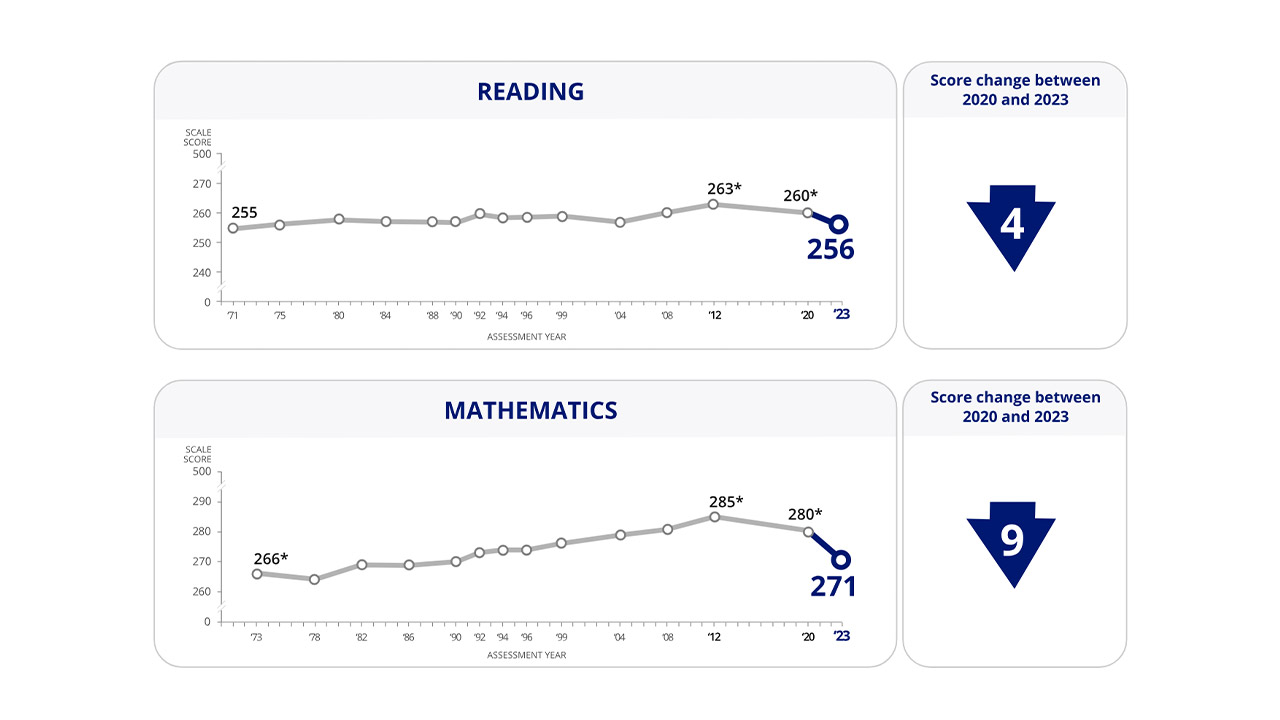

- Short-term gains (usually in narrow test score metrics) don’t necessarily reflect genuine learning

Bias and fairness concerns:

- AI systems can perpetuate and amplify existing inequalities

- Algorithmic bias affects minority and disadvantaged students disproportionately

- “Objective” algorithms often encode subjective human biases

- Students have little recourse when algorithms make errors
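
To make "testing for bias" concrete, one common check is to compare a model's error rates across student groups. The sketch below is a minimal illustration, not the method used in either source: the records are invented, and the function name `false_positive_rates` and the group labels are hypothetical. It assumes a system that flags students as "at risk" and asks how often each group is flagged wrongly.

```python
from collections import defaultdict


def false_positive_rates(records):
    """Return the false positive rate for each group.

    Each record is a tuple: (group, predicted_at_risk, actually_at_risk).
    A false positive is a student flagged as at risk who was not.
    """
    false_positives = defaultdict(int)
    negatives = defaultdict(int)
    for group, predicted, actual in records:
        if not actual:  # only students who were not actually at risk
            negatives[group] += 1
            if predicted:
                false_positives[group] += 1
    return {g: false_positives[g] / n for g, n in negatives.items() if n > 0}


# Invented data for illustration only (not from any real system).
records = [
    ("group_a", True, False),
    ("group_a", False, False),
    ("group_a", False, False),
    ("group_a", False, False),
    ("group_a", True, True),
    ("group_b", True, False),
    ("group_b", True, False),
    ("group_b", False, False),
    ("group_b", False, False),
    ("group_b", True, True),
]

rates = false_positive_rates(records)
# Gap between the most and least frequently mis-flagged groups.
gap = max(rates.values()) - min(rates.values())
print(rates, gap)
```

A large gap between groups would suggest that one group is wrongly flagged more often than another. A real audit would need far more data, several metrics, and attention to how the groups themselves are defined.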

Lack of oversight:

- Schools lack expertise to evaluate AI products

- No meaningful standards for AI EdTech claims

- Privacy protections are inadequate

- Long-term impacts are unknown

The National Education Policy Center report’s recommendation:

“Without effective public oversight, AI in schools will do more harm than good. We need a pause on AI adoption until proper safeguards are in place.”

Why This Matters for Schools

The UK government and many schools are enthusiastically adopting AI tools, but this research warns we’re moving too fast without:

- Evidence of benefit

- Understanding of risks

- Adequate safeguards

- Proper evaluation capacity

Examples of AI in UK schools:

- Century Tech (adaptive learning platform)

- Various AI homework helpers

- Automated essay scoring

- AI-generated teaching materials

- Chatbot “tutors”

Most of these have not been rigorously evaluated for effectiveness or bias.

What Parents Should Know

If your child’s school is using AI tools, ask:

- What evidence is there that this AI system improves learning?

- Has it been tested for bias?

- What data does it collect about my child?

- Can I see the algorithm’s recommendations for my child?

- What happens if the AI makes an error?

Be particularly concerned about:

- AI systems that make high-stakes decisions (course placement, intervention targeting)

- Black-box algorithms where neither teachers nor parents can see how decisions are made

- Claims of “personalisation” without evidence

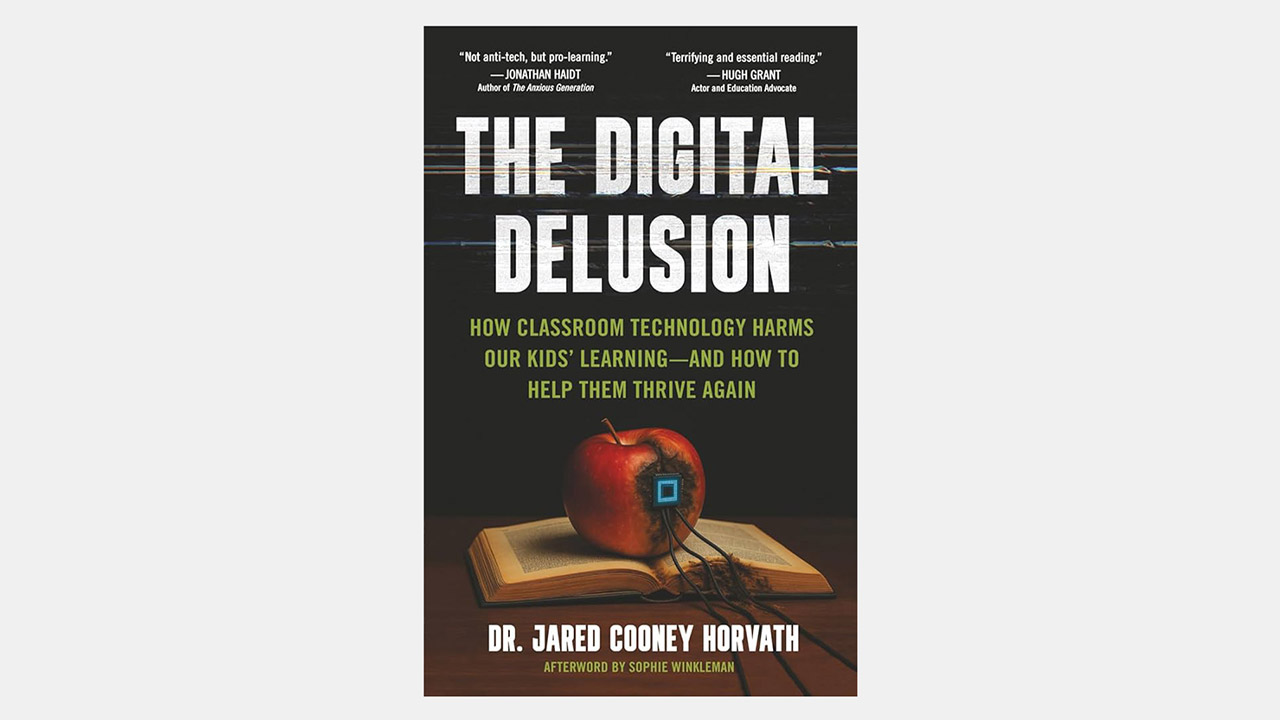

The Broader Context

This report is part of growing international concern about AI in education:

- South Korea has pulled back on AI textbooks (2025)

- Multiple countries are calling for AI regulation in schools

- Academic researchers are raising serious concerns

- UNESCO has urged caution

Access the Research

[Research on algorithmic bias in education – various sources]